Authorisation and profiling

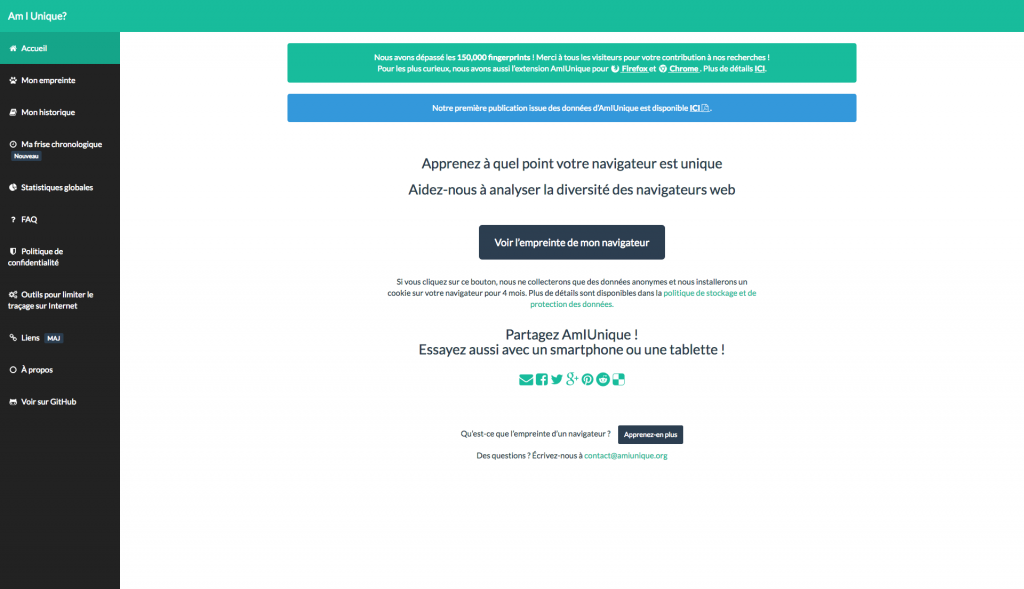

Does your smartphone run Google, Apple or Microsoft software? When you install an application from an app store such as Google Play, you are asked to grant it « authorisations ». This is a crucial step in the download and setup process: by accepting them, you may well give the application access to your phone’s location, contacts, photos, camera and unique device identifier.

These authorisations do not simply enable useful features. Their main purpose is to collect data on how you use the application, which is often free.

Your data is then transferred to the application operator’s computers (often hosted in another country): the « cloud ». It is kept for many years and, together with data from millions of other people, analysed using high-performance statistical and mathematical tools. This is a genuine and major cybersecurity issue.

Maryline Boizard points out: “This is especially true of personal data that is cross-checked and aggregated from several sources. This gradually builds up a very precise user profile.”

This profile is all the more personal and revealing because it combines sensitive, private data: your friends on social networks, your heart rate or the number of steps you take each day if you wear a connected fitness tracker, and how you drive and which routes you prefer if your car has GPS.

“If we consider that profiling intrudes on user privacy, then it could lead to situations of abuse,” says Maryline Boizard.

Today, for example, insurance companies are highly interested in connected personal objects and connected vehicles. Some insurers already offer bonuses or free connected objects to customers who agree to share their data. These companies currently reward careful driving and regular exercise. What, we might ask, will they reward in the future? It seems that we are on the brink of differentiated insurance bonuses based on mandatory monitoring of policyholders’ behaviour.

Economic model and use of personal data

The « totally free with ads » option is now the internet norm. If online services are to grow their user base, they have to be attractive and free, at least in their basic version. Online service operators, however, have to make a profit from these services, and they mostly do so through user-targeted advertising.

User profiles: a veritable goldmine for online operators

From an investor’s point of view, profile portfolios are the real value of any online business. Even when we use a free application, in reality we pay for it with our personal data, which is shared with the application’s owners. As the saying goes, « If you’re not paying for it, you are the product ». This economic model dates back to the beginnings of the commercial internet.

Imbalance

For Maryline Boizard: “A major legal concern is that some applications provide a minimal service in return for a huge amount of collected data.”

Take « flashlight » applications, which keep a smartphone’s flash switched on at the press of a button. This is certainly useful in the dark. But some of these applications request access to the phone’s contacts, device ID and even location. In this example, the ratio between the service rendered and the personal data given up seems imbalanced.

However, no legal text currently regulates this imbalance. Lawyers have a great deal of work ahead to improve user protection.

The right to be forgotten

The « right to be forgotten », created as an attempt to redress the balance, is a battleground between lawyers and internet giants. For example, do internet users have a legitimate right to have all information about them removed from Google search results? The CNIL (National Commission on Informatics and Liberty) believes so and fined Google €100,000 in March 2016. You may recall that Google had agreed to delete some of its search results that could harm internet users: the search engine now provides a right-to-be-forgotten form. However, the results were removed from the French Google site, and from its other sites only when the search was made from France. An internet user in the United States could still see the delisted results. Google appealed the CNIL’s decision, arguing that French law cannot be applied abroad. To be continued…

Beyond the right to be forgotten lies the ever-present question of profiling. If we could completely erase our online traces and all access to our personal information, the commercial use of our data would be guaranteed to remain limited. But this is not the case today: by ticking the « I agree to the general terms and conditions » box, we digitally sign an irreversible agreement to hand over our data for an unspecified duration.

Manage profiling

Maryline Boizard is currently working on a profiling research project with computer scientists at IRISA (Institut de recherche en informatique et systèmes aléatoires – joint research centre for Informatics, including Robotics and Image and Signal Processing). The project was launched in June 2016.

The lawyers involved in this project are drawing up an overview of current legislation to identify existing user protection measures. The researchers will then check whether new, planned measures are legally and technically adequate: there is no point in legal protection being available to individuals if it cannot be implemented technically.

For example, one law states that individuals should keep full control over their personal data (a principle known as digital self-determination). Can this law be enforced against operators? In other words, can internet users request that all their data be deleted from a social network, for example? If so, how can this be guaranteed, given that today data can only be fully controlled if it remains stored on a single computer? The legislator can impose cut-off rules, even if compliance with them is difficult to guarantee.

Simplify general terms

An experiment was recently carried out in Norway in which the general terms and conditions of 33 of the country’s most popular smartphone applications were read aloud. Together, the documents were longer than the New Testament, and it took the brave volunteers more than 30 hours to read them to the end!

Yet the development of the digital economy depends on trust: general terms and conditions need to be simplified and harmonised worldwide. Users would then be properly informed about how their data will be used and could accept the conditions in full knowledge of the facts.

Create a protection tool

“Sociologist Catherine Lejealle’s research on internet users at the ESG Management School in Paris reveals an element of fatalism,” says Maryline Boizard. “As we are used to the prevailing internet economic model, where we receive a small service in exchange for a large amount of our personal data, we take it as a given. Measures protecting the right to be forgotten should therefore be as simple and easy to implement as possible.”

Another internet?

Are there other solutions? Some people feel that a totally different internet economic model is called for: users would pay for services in exchange for a guarantee that their data remains confidential. The drawback is that only those who could afford it would be protected, which would breach the principle of Net Neutrality.

Maryline Boizard concludes: “Everyone must pitch in equally if we are to change the current model and create one that works for all.”


Maryline Boizard

Maryline Boizard is a lecturer in private law at the Université de Rennes 1 and a researcher at the Institut de l’Ouest – Droit et Europe (IODE – Western Institute of European Law). In March 2015, this joint research unit organised a scientific symposium on the right to be forgotten. Her work focuses mainly on the civil liability of economic operators, the right to be forgotten, and citizens’ fundamental rights and liberties in relation to online activities.

The lecturer-researcher has launched interdisciplinary user-profiling research projects with IRISA’s DRUID, DIVERSE and CIDRE teams.

Maryline Boizard’s personal research focuses on the liability of internet intermediaries, especially search engine providers.